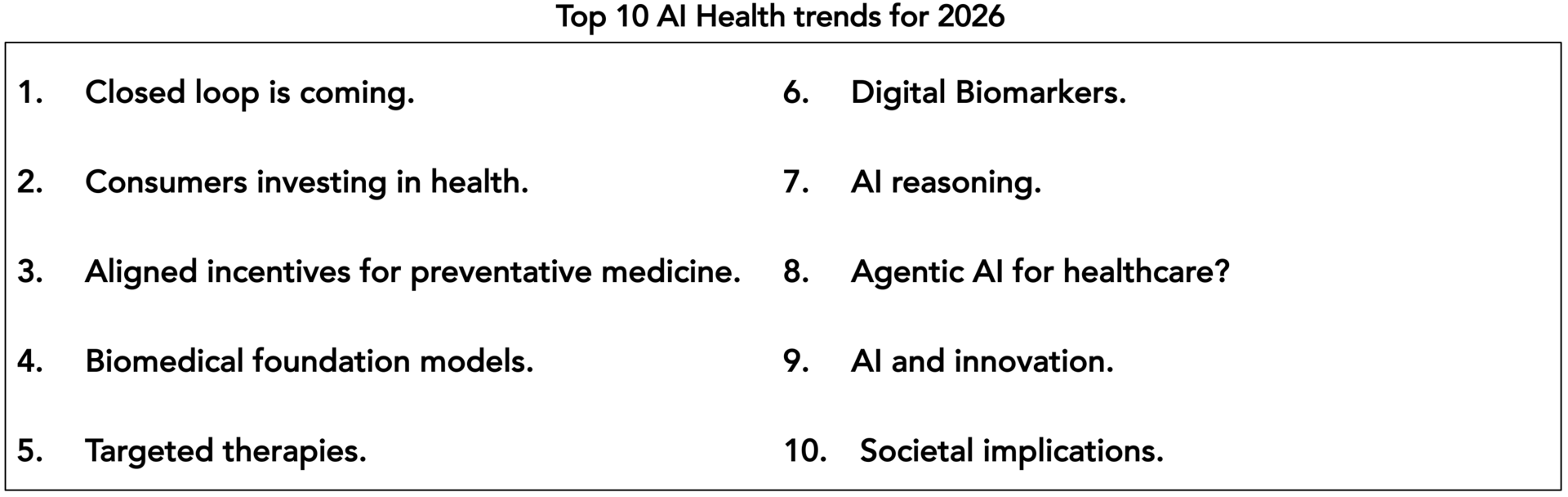

Growing up, the holidays were a time of lists. I still write one for Santa, albeit to no effect. Even as a kid, I loved the top 10 lists at the turn of the year, both for the year to come and for the one we had just lived through. And AI is certainly something we are living through, though most of it still lies ahead. As always, let’s consider how it can be used to improve our lives. Here are the trends I will be keeping an eye on in 2026!

1. Closed loop is coming.

If Santa brings me one AI health present next year, I hope it will be insight into the human brain: affordable, at scale, and in real time.

If AI is going to play Santa for people like my co-authors Szczepan and Brian and bring a gift for pharma, I think it will be a tighter coupling of feedback to AI predictions and models.

In some sense the two are one and the same: closing the loop for ML systems. But this principle can manifest itself very differently, and this is a great place to leave a few anecdotes as stocking stuffers for anyone reading.

In preventative health, this is how hyper-personalization happens: continuous adaptation toward what works best for you, whether exercise regimens, music, or dietary recommendations. It is also how you can measure whether a regimen is or is not working!

In pharma, models will be able to kick off experiments via APIs faster and more efficiently, testing their predictions and improving model performance. This might mean new compound structures for screening or optimization, with the model continually improving with each new cycle for a given target. But it could also mean closed-loop feedback from users as recommendations or insights are injected into real-world working situations!
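For the technically curious, here is a minimal sketch of that predict, choose, experiment, update cycle in Python. It is a toy under loudly stated assumptions, not how any real platform works: `run_experiment()` is a hypothetical stand-in for a lab assay or screening API, the candidates are just the integers 0 to 20, the “model” simply reuses the nearest measured result, and the exploration weight of 3.0 is an arbitrary choice that rewards testing candidates far from existing data.

```python
# Toy closed-loop "design, test, learn" cycle. Hypothetical illustration only:
# run_experiment() simulates a lab assay that, in practice, might be an API call.


def run_experiment(x: int) -> int:
    # Assumed ground truth, hidden from the model: the best response is at x = 14.
    return -((x - 14) ** 2)


def predict(x: int, observed: dict[int, int]) -> tuple[int, int]:
    # Toy surrogate model: reuse the result of the closest measured candidate.
    # The distance to that candidate serves as a crude uncertainty estimate.
    nearest = min(observed, key=lambda seen: abs(seen - x))
    return observed[nearest], abs(nearest - x)


def acquisition(x: int, observed: dict[int, int], explore: float) -> float:
    # Predicted value plus a bonus for candidates far from existing data.
    value, distance = predict(x, observed)
    return value + explore * distance


def closed_loop(candidates: list[int], rounds: int, explore: float = 3.0) -> dict[int, int]:
    # Seed the loop with measurements at both ends of the candidate range.
    observed = {c: run_experiment(c) for c in (candidates[0], candidates[-1])}
    for _ in range(rounds):
        untested = [x for x in candidates if x not in observed]
        if not untested:
            break
        # Predict and choose: max() keeps the first candidate on ties.
        choice = max(untested, key=lambda x: acquisition(x, observed, explore))
        # Run the experiment and feed the result back into the model's data.
        observed[choice] = run_experiment(choice)
    return observed


results = closed_loop(list(range(21)), rounds=6)
best = max(results, key=results.get)
print(f"tested {len(results)} of 21 candidates; best so far: {best}")
# prints: tested 8 of 21 candidates; best so far: 14
```

The exploration bonus reflects a common design choice in closed-loop settings: balancing the current best prediction against testing where the model knows least. In this toy run, the loop identifies the best candidate while testing only 8 of the 21 options; real systems would swap in a proper model with calibrated uncertainty.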

2. Consumers investing in health.

Thanks to decades of data and studies, increased awareness through social media, and higher standards of living in many places, we are more conscious than ever of how our daily life NOW affects our immediate health and wellbeing, and how it has major impacts on our future.

I personally started to suspect lifestyle made a difference after moving to Switzerland at age 30 and watching the occasional 80-year-old Swiss racing past me up a mountainside.

Your daily and weekly routines matter, mentally and physically, in so many ways. But for those of us just trying to get through life with family, friends, and careers, and without a laser-focused obsession on sport or diet, how do we better balance it all?

I’m not completely sure. BUT there are plenty of examples on this planet of digital tools and devices modifying our behavior for the worse, and also for the better; in my case, an obvious example is a simple step counter.

Some people hold almost religious opinions about molecules and medicine, or about digital sensors and devices. But if we start viewing medicines such as the GLP-1 agonists as bridges enabling a healthier lifestyle, and digital behavior modification as a tool to stabilize and lock in that healthier life, then the value of both increases.

And increased value is always of interest to pharma as well.

3. Aligned incentives for preventative medicine.

I have written previously about misaligned incentives in healthcare. Many of us have felt them personally when dealing with reimbursement, waiting for specialists, or balancing work with long-term health issues or burnout.

Burnout is a great example. Mental health tools that reduce absenteeism deliver no direct financial value to a pure health system. De-risking long-term cardiovascular values through study-proven nutrition, movement, or stress reduction does not lower this year’s health-economic burden. Or do they? And do such initiatives deliver value elsewhere?

By answering these questions, connecting long- and short-term returns through data-driven observables that bridge timescales, we can give stakeholders new and old the confidence to invest time and money in prevention.

And we already see healthcare payers moving more into the provider space!

4. Biomedical foundation models.

If we had a wall calendar themed on biomedical foundation models, we would have one for every two weeks! There are over two dozen major foundation models covering half a dozen different modalities, which can help you cover everything from PubMed to genomics databases to histopathology.

I think this is particularly noteworthy for two reasons. The first, of course, is multimodal approaches, which take a more holistic view of patient and biomedical insights. The second is that downstream fine-tuning of these foundation models can be thought of as transferring expertise from the world’s top specialists to, for example, junior doctors or GPs; improved inter-rater consistency in digital pathology comes to mind.

It also means that someone has been able to collect the data needed to build these models in the first place, and that data is often publicly available. And the training costs are far below the earth-shaking sums spent on the big-name LLMs.

5. Targeted therapies.

My favorite pure-pharma trend this year is targeted therapies. I think AI is heavily involved in this trend and will further enable it, and it creates so many opportunities for data-driven approaches and sequence optimization, from protein engineering to neoantigens.

This one deserves a future post of its own!

6. Digital biomarkers.

Moving from carbon and molecules to silicon and sensors, I believe digital biomarkers have the ability to impact neuroscience the way genome sequencing has impacted oncology. There is ample evidence for early detection (e.g., of dementia) and even for capturing disease mechanisms of action (MoA) in psychiatric indications. We will dive into some of these modalities in the very near future!

7. AI reasoning.

Reasoning models (you might have heard of techniques like chain-of-thought) will be able to break complex tasks down into smaller, more manageable chunks. And what is pharma research (or even medical decision-making) if not an entire corporate org structure and thousands of people designed around one ungodly complex task?

This is going to be very important, but it feels like the field is still searching for a prerequisite idea or two.

8. Agentic AI for healthcare?

Or rather, reasoning + foundation models + agents = ?

Part of what makes reasoning so exciting is the opportunity to pair it with the earlier trend of biomedical foundation models. Just as each of us has our own specialty and domain knowledge, future agents will leverage the embeddings and outputs of the various biomedical foundation models and integrate the pieces.

One of the exciting things about agents is their ability to learn how to work together in a given context to accomplish a given task, just like we do. But healthcare requires additional Rs: reliability, reproducibility, robustness, and regulation. I recently had the opportunity to discuss this with a friend of mine, Prof. Gaurav Chopra, who advocates for neurosymbolic AI and collaborative memory to help address these problems, a topic worth revisiting in 2026!

I would argue that we need to think about the future of “gen AI” in a more distributed and emergent manner.

9. AI and innovation.

Will AI help avoid innovation valleys of death or trap us in them?

Finally, coming full circle back to innovation: the trends above show a great wealth of opportunities for AI to improve care and accelerate innovation.

But how do we move from making cool predictions from the data we have available to strategically solving our biggest problems?

Why have so many former colleagues said they hear and read about exciting AI everywhere, but it doesn’t really seem to help them in their day job?

Why have we had so many cool AI proofs of concept but few scaled solutions? Is this already changing, or about to?

See Brian and Szczepan’s pieces from this month!

But let’s switch to a more fun form of this question to close it out!

10. Societal implications.

What will AI mean for us as individuals?

Is AI going to take all our jobs? Or will it make me better at my job? Will it make my job more mindless and meaningless, or more interesting? What about start-ups: will all the innovation be concentrated among a few tech giants? Or will it enable countless small teams on the edge?

As a technology, AI undoubtedly has the potential to increase our productivity as a society. How we choose to direct and distribute that increased productivity is up to us. Just like globalization and automation, it will make some specific functions obsolete. And while I hope we have learned how to mitigate these effects, given the recent populist backlash they have sparked, the effects are not yet at doomsday levels.

Recent studies by MIT Sloan suggest that only around 10% of jobs could be fully automated, according to their Iceberg Index. More often, only specific tasks within an occupation can be automated. When AI-automatable tasks are highly concentrated in an occupation, employment can fall, but typically by around 14%. And when only a minority of tasks can be automated, employment often grows. What I found most interesting was the view of human-AI complementarity under MIT’s “EPOCH” framework (including empathy, ethics, creativity, and leadership), which evaluates how human-intensive, and therefore not easily replaceable by AI, various segments are.

In life science, I remain convinced the current wave of AI will augment our healthcare professionals and scientists, not replace them.

Regarding start-ups: at AI summits this year, there was often discussion of the centralization versus decentralization of AI, and the consensus seemed to be that it will be both.

There will be centralized control over the large foundation models (today, the LLMs) and over the ability to train them if, for example, parameter counts stay the same or keep increasing.

However, I liked the anecdote that today’s “two-pizza teams” could become “one-pizza teams”, or just a handful of people, in the future. The ability of AI to act as a software engineer will enable small, innovative teams to deliver highly scalable solutions and do more with less, encouraging an explosion of decentralized, lightweight, and fast-moving innovation.

We will need to ensure that the balance includes the latter, and to de-risk the societal impacts more generally. But let’s close the thought with health. Regulation and de-risking are, of course, at the heart of biomedical research, pharma, and healthcare.

What are the fears, worries, or simply the biggest questions out there around AI in health? Will it make my care increasingly virtual and impersonal? Will my own genome and lab tests be used against me by insurers or employers? Will health innovation only benefit the wealthy, or will it have a global impact for the many and reduce inequities?

I would love to understand from all of you out there what you feel are the most pertinent questions to dive into around AI and health!

After all, it will be on many of us to help define the answers.