Let’s get one thing out of the way: I’ve lost count of how many times I’ve been pitched a “game-changing” technology that was supposed to fix everything that’s broken in drug development. Most of the time, these silver bullets turn out to be blanks. Still, every so often, something genuinely surprising happens—a new approach or tool actually moves the needle, or, at the very least, helps us see the problem a little more clearly.

Reading Brian Berridge’s piece, “The Tail is Wagging the Dog,” felt a bit like group therapy for those of us who have spent years watching the industry chase its own tail—designing solutions and then desperately searching for a problem to fit them, especially in the world of animal models. Brian’s honesty about the disconnect between what animal studies promise and what they actually deliver in the clinic hits home. It’s a story I’ve lived more than once, usually at 2 a.m., staring at data that doesn’t make sense and wondering where we went off course.

But here’s the thing: it’s too easy to point fingers at “bad models” or blame every failure on technology that didn’t live up to the hype. The real issue is more fundamental—and more fixable. It’s about how we define our problems, how we choose our tools, and, most importantly, how we combine them to actually answer the right questions.

So, in the spirit of Brian’s call for more honest conversations, I want to take a hard look at where innovation in drug development actually happens (and where it doesn’t). I’ll share a few stories—some messy, some encouraging—about how forward translation, reverse translation, and the right mix of old and new tools can make all the difference, but only when we stop letting the tail wag the dog and start steering with purpose.

The Animal Model Conundrum: Still Center Stage, But Why?

Here’s the uncomfortable truth—animal models aren’t going away anytime soon. They’re still the backbone of most preclinical programs, and for good reason: when they work, they really work. There’s a certain logic in using a living system to model another living system, and every so often, an animal study points us in exactly the right direction.

But more often than we’d like to admit, they don’t. I remember a project early in my career where we ran a beautifully designed study in mice, convinced we were looking at a sure-thing cancer therapeutic. The results were stunning—at least, in mice. In humans, the effect evaporated completely. We spent months chasing artifacts, looking for errors in execution or measurement. The answer, it turned out, was that our model simply didn’t capture what mattered most in the human disease. That disconnect cost us time, money, and maybe a chance at helping real patients.

This isn’t a knock on the people running animal studies—far from it. It’s an admission that biology is messy, that translation is rarely straightforward, and that our comfort with what’s familiar can cloud our judgment about what’s actually useful.

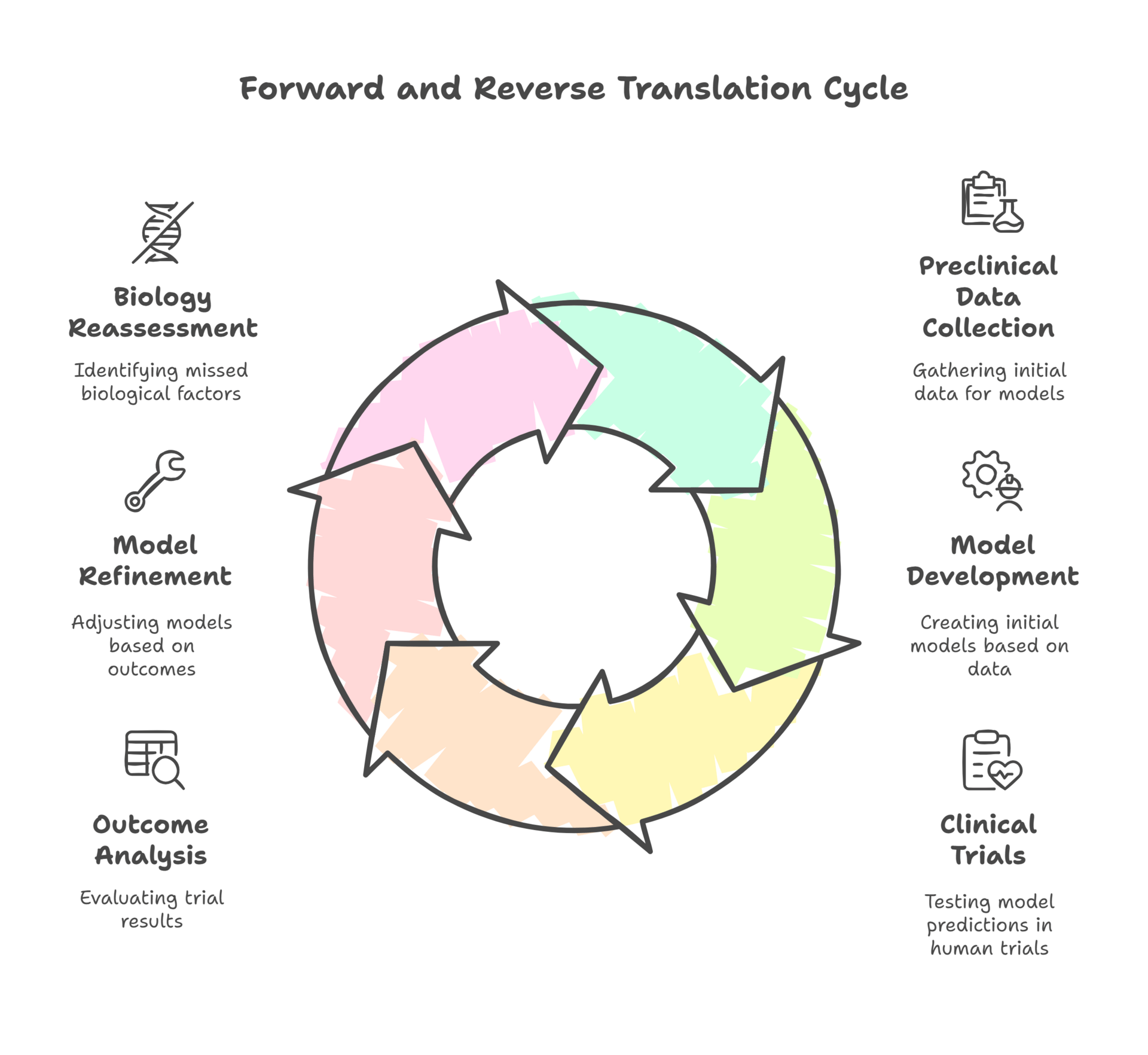

Forward and Reverse Translation: Building a Real Feedback Loop

Reverse translation isn’t glamorous. It doesn’t make for keynote slides or investor decks. But when we take patient data back into the lab, we stop pretending our models are better than they are and start making them useful. The most important scientific act isn’t building a shinier model—it’s admitting when the one we love just failed and asking what biology we missed. That humility is what separates science from theater.

Ask anyone in drug development about forward translation, and you’ll get a weary look. Making the leap from preclinical data to clinical outcomes is a high-wire act, full of pitfalls. I’ve seen promising signals in models dissolve the moment you move into human trials—not because the science was bad, but because the context was missing.

What’s often overlooked, though, is the power of reverse translation. A few years back, I was involved in a program where our first-in-human trial produced results that flat-out contradicted everything our preclinical work had suggested. Instead of sweeping it under the rug, the team made the (rare) decision to go back to the drawing board. We took the clinical data, dug into patient-level outcomes, and started retooling our preclinical models to better reflect what was actually happening in people. It was humbling, and it didn’t save the program, but it set us up for success in the next round. We didn’t just ask, “Why didn’t the model predict the outcome?” We asked, “What did we miss about the biology?” That feedback loop is still far too rare in this business.

The reality is, most organizations treat forward and reverse translation as one-way streets. The feedback that could improve the next model—or the next technology—never gets shared, and lessons learned are lost in the shuffle.

The Power (and Pitfalls) of Combinatorial Innovation

Nick Kelley reminds us that AI is best seen as a complement to human judgment, not a substitute. That lines up with my view that layering organoids, digital biomarkers, and AI works only when the question demands it, not when the tools are merely flashy.

From an investor’s angle, most of the waste isn’t in failed science—it’s in chasing artifacts. I’ve seen millions of dollars and years of runway vanish because a team refused to call a model’s bluff. That’s not bad luck; that’s avoidable burn. If you’re looking for the next investable edge, it isn’t another platform demo—it’s the companies disciplined enough to close the loop between clinic and preclinical. Reverse translation isn’t just good science; it’s good capital hygiene.

If there’s one thing I’ve become certain of, it’s that there’s no single magic bullet. The real breakthroughs I’ve seen in recent years haven’t come from tossing out the old for the new, but from layering and integrating tools in a way that lets each compensate for the others’ weaknesses.

Take one project where we combined organoids, digital biomarkers, and AI-based analytics. None of these approaches, on their own, would have gotten us to the right answer. Organoids gave us human relevance, digital measures let us capture subtle effects over time, and AI helped make sense of the noise. The trick wasn’t just using all three—it was asking a question that required all three to answer.

Of course, sometimes the combinatorial approach backfires. I’ve been in meetings where we threw every new technology at a problem and ended up with a mess of conflicting data and no clear path forward. The lesson? If you don’t have a well-defined problem, more tools just make more noise.

Defining the Problem—Before Chasing the Solution

As Nick Kelley underscores in his article, there’s no push‑button path to AI‑discovered drugs—what matters is using AI where it actually clarifies a decision rather than decorating a slide.

I can’t say this enough: too often, we let technologies drive the agenda, instead of the other way around. We chase shiny solutions, build PowerPoints, write big checks, and only then start looking for a problem to solve. I’ve been guilty of it myself, and I’ve paid the price.

One of the best lessons I ever learned came from a clinical development lead who, after listening to yet another pitch for a digital endpoint, stopped the meeting cold and asked, “What’s the exact decision we’re trying to make, and what’s stopping us from making it?” It was a simple question, but it cut through months of accumulated jargon and salesmanship. Since then, I’ve tried to keep my own “gut check” list:

Is the problem real, urgent, and shared by the team?

Will this tool actually change a decision, or just decorate a slide?

Who will own the results, and who will be accountable if they’re wrong?

If the answers aren’t clear, it’s time to rethink the approach.

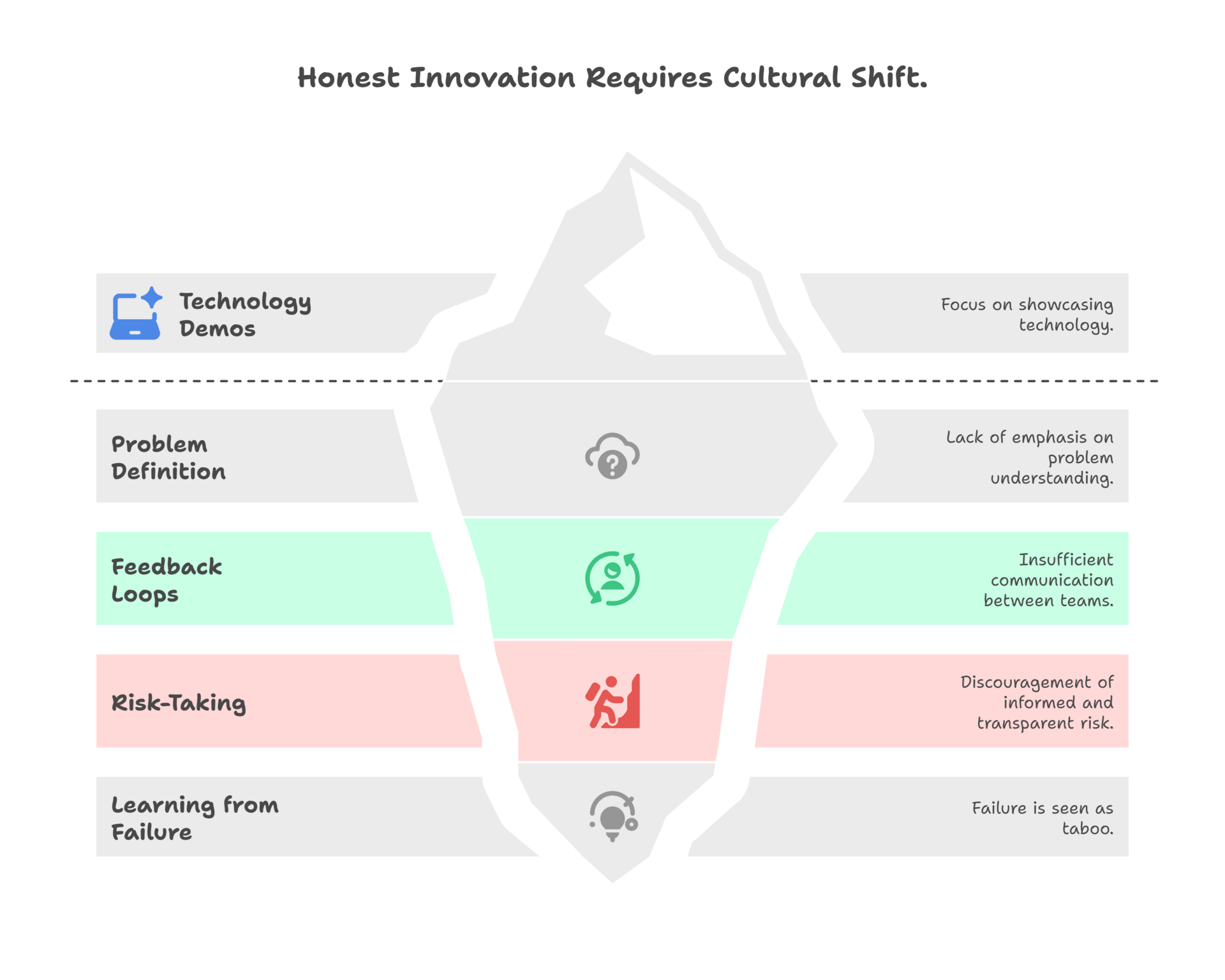

Toward a Culture of Honest Innovation

And to borrow again from Nick Kelley’s framing: efficiency dashboards don’t sway a P&L. Clarity does. That same principle applies beyond AI—whether it’s animal models, digital endpoints, or the culture that drives how we use them.

For leadership, the biggest lever isn’t the next tool; it’s culture. I’ve sat in meetings where a technology demo got more airtime than the decision it was supposed to inform. That’s upside-down. The real executive move is to reward teams for problem definition, for feedback loops, for saying “this model failed and here’s why.” Those behaviors look inefficient in the moment, but they can save portfolios years and companies hundreds of millions. Culture is not soft; it’s ROI.

So what would it look like if we actually built a culture that values process innovation as much as the next new gadget? For starters, meetings would be less about technology demos and more about ruthless problem definition. Teams would be rewarded for closing the loop between clinical and preclinical, not just for moving fast. Leadership would encourage risk-taking, but only if it’s informed and transparent, and failure wouldn’t be taboo—it would be expected, as long as we learn from it.

It’s not about being cynical or dismissive of new technology. It’s about demanding more from ourselves and our tools. If we keep asking the hard questions—and keep our eyes on the real problems—innovation becomes something we manage, not something that happens to us.

Wag the Dog, Don’t Let It Wag You

To circle back to Brian’s theme: are we letting the tail wag the dog, or are we finally ready to take the leash and set our own direction? My hope is that we’re getting closer to the latter. But hope isn’t a strategy. The only way forward is to keep challenging our assumptions, keep learning from our failures, and keep reminding ourselves that the goal isn’t to use more technology—it’s to solve the right problems, for the right reasons.

That’s messy, uncomfortable work. But if we’re not a little uncomfortable, we’re probably not innovating at all.

So, as we kick off this newsletter, here’s my challenge: let’s make this a space for the stories that usually get left out of the conference talks and the glossy pitch decks. The missteps, the surprises, the hard-earned lessons—those are where the real innovation happens, and that’s what we’ll keep tackling here. If you’ve ever found yourself questioning the status quo or wrestling with an unexpected result at 2 a.m., you’re in the right company. Stay with us—share your own stories, argue with us, push the conversation forward. If you’re hungry for the unvarnished truth about where science and technology meet (and sometimes clash), you’ll want to see what’s coming next.

Join us and your colleagues, and let’s keep making innovation uncomfortable together.