So, it’s safe to assume that Gen AI will start “generating” new medicines soon too, right?

Alas, there will be no push-button solution for new “AI drugs” anytime soon – at least not in the traditional sense.

Let’s think about why, but also about how AI is already helping in drug discovery and development, how it will help further, and what exciting developments to expect from the next round of innovations.

Discovering new drugs is incredibly complex: it spans many organizational stages and data silos, and it often requires new biological knowledge, especially about causality. The integrated decision making covers everything from unmet medical needs, to commercial viability, to clinical feasibility, to simply translating the latest papers into research settings. Hence a single AI platform is currently not sophisticated enough for a push-button approach – not from existing data, not from the automatic creation of new data, and not at the depth that the decision making requires (not to mention the magnitude of some of the decisions, both financially and ethically).

But I would argue AI can, should, and will help with all of this. Furthermore, the developments at the leading edge of tech should help expand where and how AI can be used. This includes breaking silos by chaining models together, integrating upstream and downstream decision making by bringing constraints and insights into optimization and selection, speeding up iteration cycles through better and unbiased predictions, and reducing the chance of failure through better-optimized molecules, patient populations, and study designs.

A good example – and one not to be confused with push-button solutions – is the low-hanging fruit one might call “almost drugs”. Clinical trials fail, drugs get shelved, and we all know existing drugs may yield benefits in additional indications via biological cross-talk, network redundancy, or perhaps pleiotropy if one wishes to sound fancy. These often well-defined problems provide opportunities for very focused, data-driven solutions.

Failed on efficacy? Maybe it was the wrong population – leverage EHRs with biomarker data, or mine publications, to better define a patient cohort and pull signal from noise, rescuing the drug and moving it towards the next phase.

Failed on safety? Do the same, but with chemical assay data, to alter the chemical structure or add cell-line-specific delivery and improve the safety profile (easier said than done, of course).

Additional indications? Leverage anything from real-world data (RWD) to scientific publications to find evidence of benefit in novel indications.

Note: “repurposing” a drug might seem like a push-button approach for a new drug, but it is a very special situation and a well-defined task, which is what AI is great at.

Look under the hood and find real-life people:

But what about the typical vanilla pharma situations? Drug discovery and development can be conceptualized as one long chain of well-defined tasks in some sense. So if we look under the hood, we can start by enabling the actual people responsible for these ideas/decisions/designs. Not just to do their jobs slightly faster for the ubiquitous “efficiency gains”, but to become “better” at them. So let’s think about the ways AI/ML is complementary to humans, and how this plays out in pharma.

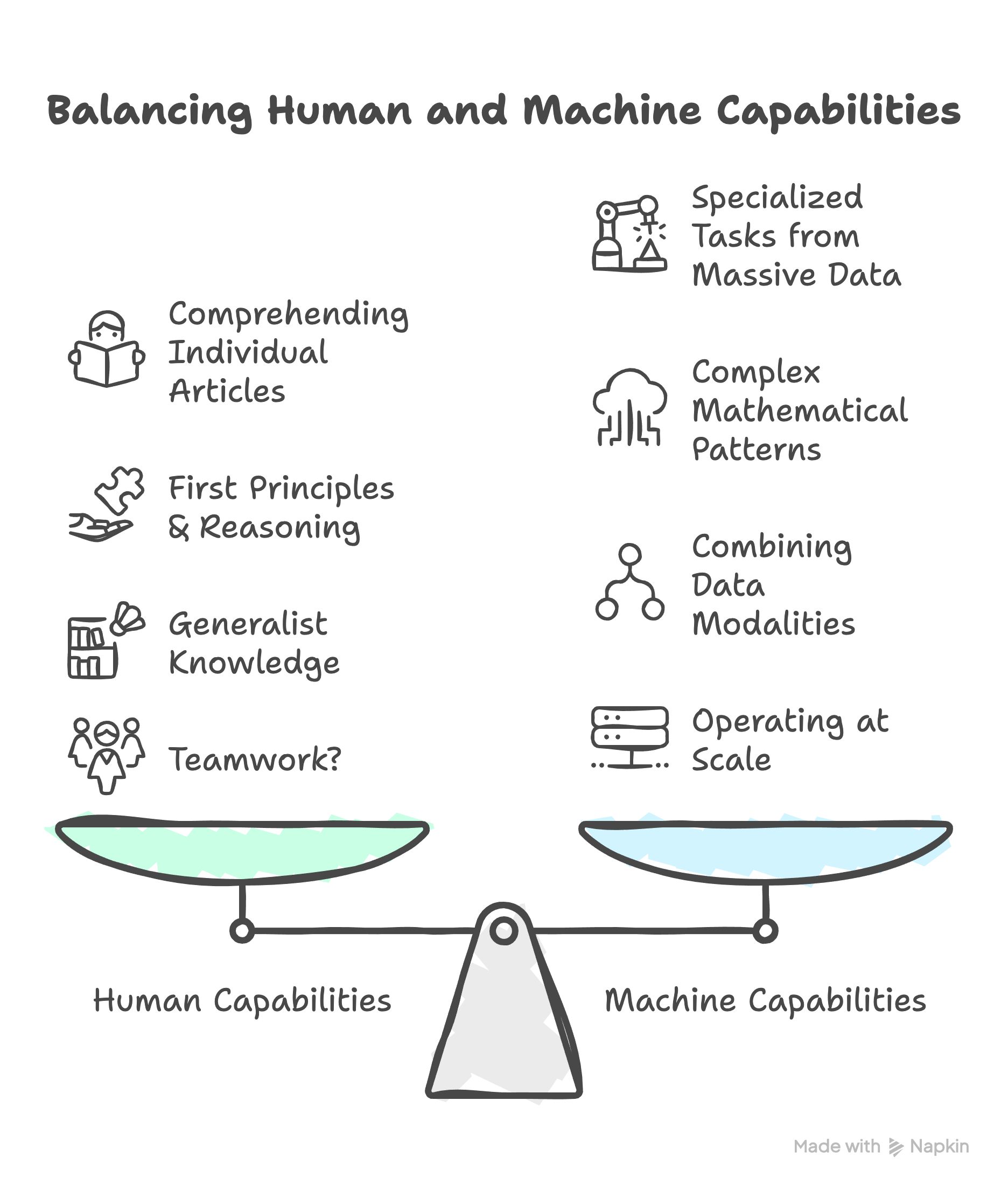

AI is complementary to humans:

Access larger amounts of data: e.g. read more publications, compare more similar biomedical images

Easily learn complex features: learn mathematical relationships from general, well-known examples (given sufficient data) and transfer them to other very specific (low data) tasks

Multi-modal: combine different types of data (e.g. doctors’ notes and images) by leveraging the learned features from the previous point, the “embeddings”

Operate at scale: deploy to larger amounts of data and larger numbers of users, in effect transferring knowledge from senior doctors/scientists to improve the performance and skill of those less experienced

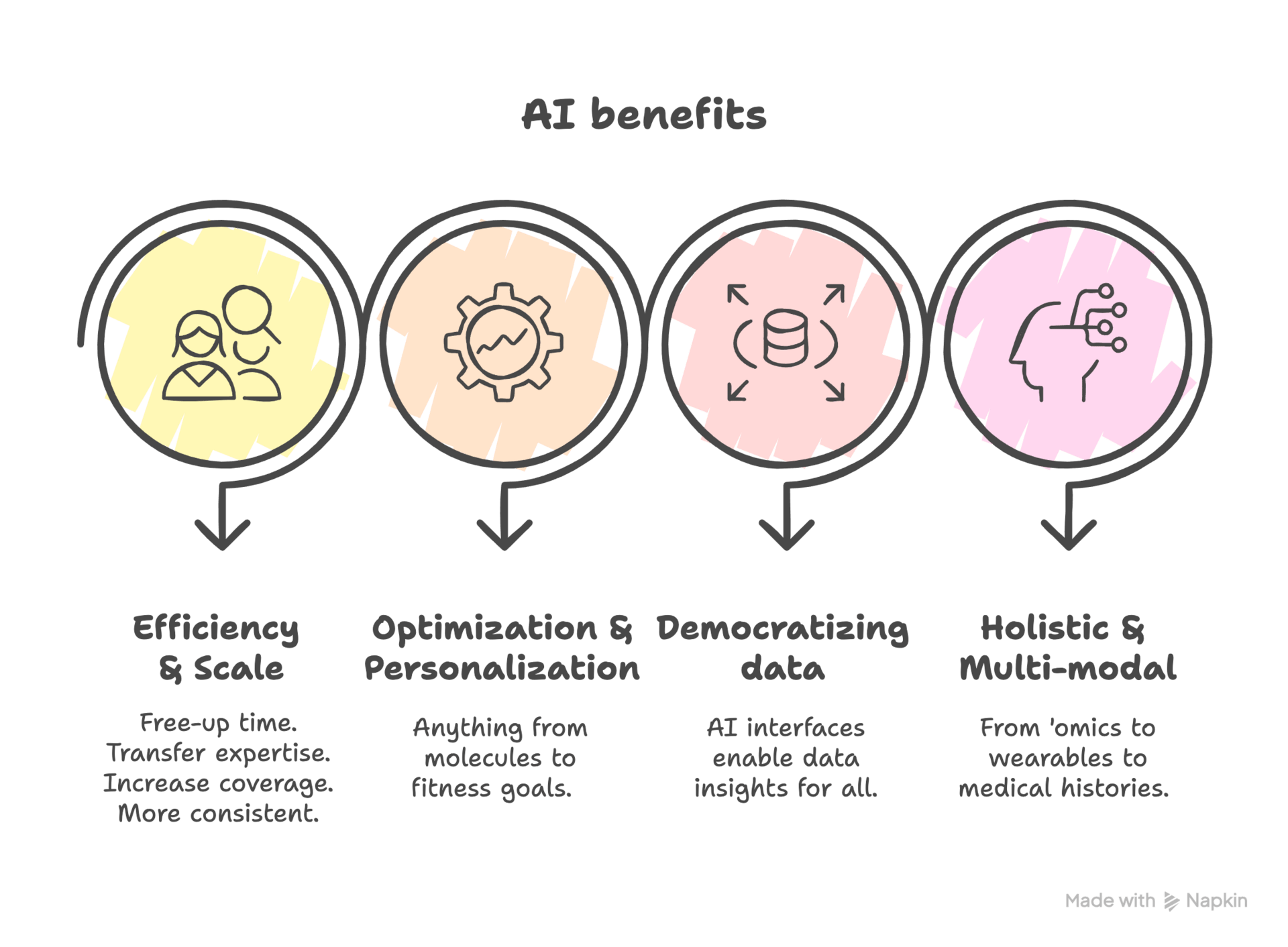

Efficiency: we are in the age of LLMs, which can e.g. generate first drafts, offer helpful suggestions/critiques to save time later, and translate your questions into data queries

To be fair, it can be argued that we humans can still best our machine counterparts when it comes to reading and understanding the implications of a single article, or elucidating first principles and causal relationships – simply put, the scientific method! We are also better generalists, adapting easily to different contexts. (Hopefully the list goes on and on!)

So which areas can most benefit from such complementarity? Let’s think about the areas where AI can help reduce time, increase success or catch new ideas – all of which are already happening!

Learning complex patterns from high dimensional data:

e.g. Geneformer – transfer learning from ‘omics to better predict network biology

Hypothesis generation:

Target ID and compound-target predictions: match a disease signature vector (e.g. from a knockout) to a compound response vector (e.g. drug seq) in one’s favorite abstract embedding space, e.g. FRoGS

Information synthesis: e.g. structure information from PubMed and other literature in an intuitive way, so that humans can explore predicted connections (BenevolentAI, Causaly)
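To make the signature-matching idea under target ID a bit more concrete, here is a minimal sketch that ranks compounds by cosine similarity between a knockout signature and each compound's response vector. To be clear, this is not how FRoGS itself works; the function names, compound names, and three-dimensional toy vectors are all made up for illustration, and real embeddings would have hundreds of dimensions.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # treat an all-zero vector as "no similarity"
    return dot / (norm_u * norm_v)


def rank_compounds(disease_signature, compound_responses):
    """Return (compound, similarity) pairs, most similar first."""
    scored = [
        (name, cosine_similarity(disease_signature, vector))
        for name, vector in compound_responses.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy embeddings, invented for this example.
knockout_signature = [1.0, -0.5, 0.0]
compound_responses = {
    "compound_A": [0.9, -0.4, 0.1],   # mimics the knockout
    "compound_B": [-1.0, 0.5, 0.0],   # opposite response
    "compound_C": [0.0, 0.0, 1.0],    # unrelated response
}

ranking = rank_compounds(knockout_signature, compound_responses)
for name, score in ranking:
    print(f"{name}: {score:+.3f}")
```

A compound whose response looks like the knockout of a gene is a candidate modulator of that gene, which is why the top of the ranking is the interesting part here.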

Scale & transfer expertise between professionals, improve consistency:

Improve inter-rater consistency in pathology, find similar images for a pathologist through content-based retrieval, catch subtleties humans miss, or help train new doctors with models trained on world experts, getting them up to speed more quickly (portrAIt)

Better complex optimizations:

Let’s take med chem: providing unbiased suggestions for lead optimization – moves in chemical space less familiar to a specific chemist – and potentially bringing in additional downstream constraints for things like formulations, etc. (useful overview).

Democratize data:

Clinicians and trial planners can leverage LLMs to ask questions of data (that they might have already paid for!) rather than of publications: where are patients distributed and how many are there, and which sites and which doctors would be ideal? Can we accelerate recruitment? Based on past submissions, are there any risks in our clinical trial protocol? (here is a recent post from a friend).

Multi-modal for more holistic understanding of biology and patients:

Bring, say, biological information into chemical decision making or vice versa. E.g. use structural embeddings from things like AlphaFold to bring new information into compound-assay activity predictions (will the drug bind to its target and have the desired effect?). As an illustration, this could perhaps help integrate virtual screening with in-silico lead optimization.

AI will also bring different sorts of continuous information and evidence around safety, efficacy, and personalized insights – in particular quality of life – to new digital/diagnostic tools and therapies. We’ll dive into this one in particular next time.

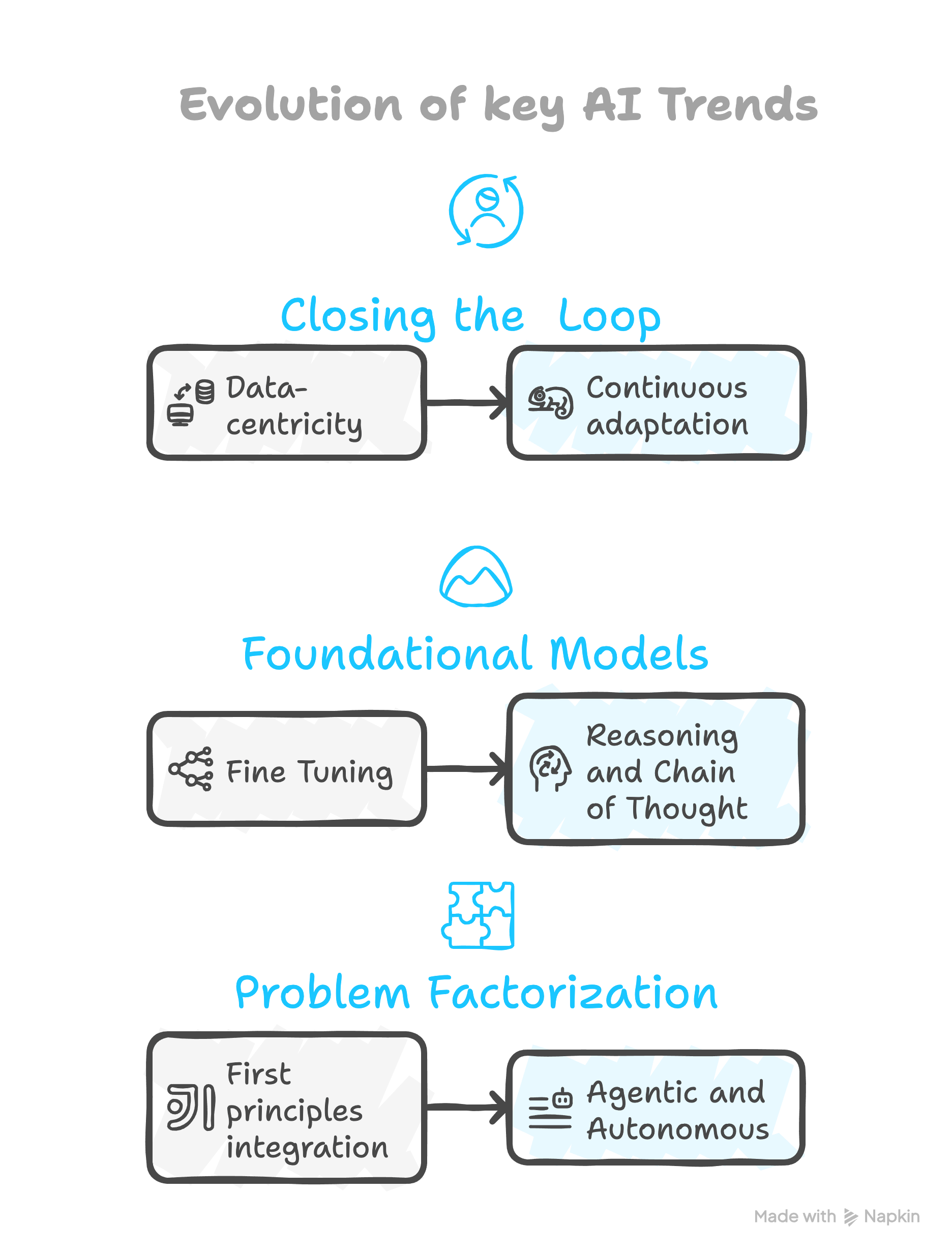

Additional AI trends to watch for in life science:

What are some of the current themes for applying AI at the leading edge of life science?

“Data-centric” AI (of which Prof. Ng is an advocate) will be a core theme:

A larger number of in-silico predictions (think protein sequences, compound structures, new targets) will be tested through both lab automation and more computationally expensive simulations (see “First-principles” below).

This will not only help insert AI into human workflows, but can also be used to reduce model uncertainty or iteratively improve local activity models throughout the course of a project.

“First-principles”: breaking the problem down and combining ML with scientific knowledge or first principles. For example, integrate the underlying mechanistic knowledge with the immense pattern recognition capability of ML. Using PK/PD as an example, think about the mechanistic “known parts” of PK/PD modelling in combination with a data-driven neural ODE approach for the “unknown parts” like individual variability.

“Low data ML & Foundation models”: doing more with less is always popular given the high-dimensional nature of biological data. The trend towards foundation models will continue, facilitating the transfer of general learnings derived from large amounts of public data from varying contexts to the interpretation of smaller, context-specific data generated for a given purpose. These models can be fine-tuned for many downstream tasks and use cases, potentially even combining modalities.
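The PK/PD example under “First-principles” can be sketched in a few lines. This is a deliberately simplified illustration under stated assumptions: a one-compartment elimination model is the mechanistic “known part”, and a one-parameter residual term stands in for the neural network that a real neural ODE would use for the “unknown part”. The rate constant, dose, sampling times, and function names are all invented for the example.

```python
import math

K_ELIM = 0.2  # assumed population elimination rate (1/h): the "known part"


def residual(conc, theta):
    """Data-driven "unknown part". A real neural ODE would put a small
    neural network here; a single learnable coefficient keeps this runnable."""
    return theta * conc


def simulate(c0, theta, times, dt=0.01):
    """Euler-integrate dC/dt = -K_ELIM * C + residual(C, theta).

    `times` must be sorted ascending; returns the concentration at each time.
    """
    concentrations = []
    conc, t = c0, 0.0
    for target in times:
        while t < target - 1e-12:
            step = min(dt, target - t)
            conc += step * (-K_ELIM * conc + residual(conc, theta))
            t += step
        concentrations.append(conc)
    return concentrations


def fit_theta(c0, times, observed, candidates):
    """Pick the candidate theta with the lowest squared error (grid search)."""
    def loss(theta):
        predicted = simulate(c0, theta, times)
        return sum((p - o) ** 2 for p, o in zip(predicted, observed))
    return min(candidates, key=loss)


# Synthetic patient who clears the drug faster than the population (0.3 vs 0.2 per hour).
sample_times = [1.0, 2.0, 4.0, 8.0]
observed = [10.0 * math.exp(-0.3 * t) for t in sample_times]

grid = [i / 100 for i in range(-20, 21)]
best_theta = fit_theta(10.0, sample_times, observed, grid)
print(f"fitted individual correction: {best_theta:+.2f} per hour")
```

The mechanistic term carries what is already known about elimination, so the learned part only has to explain the individual deviation, which is the reason such hybrids can get by with fewer data points per patient.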

Towards the future.

What article is complete without a little futurism sprinkled in?

Let’s spend a moment on the major developments at the cutting edge of pure AI, as far as they pertain to life science.

Whereas a handful of years back any form of super-human intelligence seemed out of reach and decades away, the incredible pace of progress around LLMs now has experts (see: pretty much every panel discussion I witnessed this year) worried about how quickly it might arrive and what the implications will be when it does. To quote Eric Schmidt (former Google CEO), all of the ingredients needed are there, we are just missing an idea.

The two dominant themes right now are reasoning and agentic models, and I would argue both are critical in life science and drug discovery.

Reasoning models will break down complex processes into smaller steps, or a “chain of thought”, designed to perform logical deductions, and will make headway towards causality, obviously critical to scientific discovery. And if you think about the “problem” of creating a new drug, we’ve already discussed how pharma has broken down the process as a whole into smaller teams and business units within the larger organization, and consequently into smaller, specialized everyday tasks as well.

Agents: This not only echoes some of the motivation behind reasoning, but also leads us to the rationale behind agentic AI. Think about the many different “people agents” interacting with each other, passing information, making deductions and guiding decisions to accomplish a task, each with very specialized knowledge. In the case of pharma, that task is creating a new medicine.

Evolving closed loop:

“Continuous adaptation”: A favorite recent example worth mentioning is liquid neural nets, which elegantly blend aspects of the first-principles and low-data themes above. They leverage ODEs to offer more descriptive neurons that can be trained on less data, and they adapt and generalize in real time thanks to their “fluid” handling of time constants and incorporation of causal structures. Applications could range anywhere from protein directed evolution to achieving personal fitness goals.

Towards “Scale-free”: Data is ubiquitous throughout biomedical research. Mathematics, statistics and data interpretation are always core activities. Consider that AI is already better at math than your “average person” and has now started to perform mathematical proofs. In fact, math is an intuitive example of scale-free AI. There are examples of pairing an AI system that can conjecture, creating novel mathematical ideas or guesses, with another system designed to generate the mathematical proof. This is “scale-free” because of the minimal investment needed for closed-loop iterations aimed at expanding mathematical knowledge. That said, leveraging such tools to guide human intuition seems to be the current way of advancing mathematics. It is not difficult to extrapolate this concept to physics/chemistry/biology. Picture a string of agents or models that make predictions – whether protein sequences or novel drug targets – tightly coupled to agents that can validate these predictions via experimental automation or first-principle simulations.
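As a toy illustration of that predict-then-validate loop, the sketch below pairs a “proposer” that suggests single-point mutants of a sequence with a “validator” that scores them, and feeds the best result back into the next round. Everything here is a stand-in I made up for illustration: the validator simply counts matches against a hidden target string, where a real system would call lab automation or a first-principles simulation, and the sequences are arbitrary.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # one-letter codes of the 20 standard amino acids


def propose(parent, rng, n_candidates=8):
    """Prediction-agent stand-in: propose single-point mutants of the parent."""
    candidates = []
    for _ in range(n_candidates):
        pos = rng.randrange(len(parent))
        candidates.append(parent[:pos] + rng.choice(ALPHABET) + parent[pos + 1:])
    return candidates


def validate(sequence, hidden_target):
    """Validation-agent stand-in: fraction of positions matching a hidden target.
    In practice this would be an assay or a simulation, not a string comparison."""
    matches = sum(a == b for a, b in zip(sequence, hidden_target))
    return matches / len(hidden_target)


def closed_loop(start, hidden_target, rounds=20, seed=0):
    """Run propose -> validate -> keep-the-best for a fixed number of rounds."""
    rng = random.Random(seed)
    best, best_score = start, validate(start, hidden_target)
    history = [best_score]
    for _ in range(rounds):
        for candidate in propose(best, rng):
            score = validate(candidate, hidden_target)
            if score > best_score:  # only validated improvements are kept
                best, best_score = candidate, score
        history.append(best_score)
    return best, history


start_sequence = "AAAAAAAAAA"     # arbitrary starting point
hidden_target = "MKTAYIAKQR"      # arbitrary "ideal" sequence, unknown to the proposer
best_sequence, score_history = closed_loop(start_sequence, hidden_target)
print(best_sequence, score_history[-1])
```

The point is the shape of the loop rather than the toy scoring: the cheaper each validation becomes, the more rounds one can afford, which is exactly where the “scale-free” argument comes from.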

So to understand the next wave of intelligence, at least initially, I am seeing a consensus that we need to think more in terms of how we all work together collectively in our everyday lives as “agents” to solve complex problems, as well as how we break problems down in our own heads.